Posts Tagged privacy

Guiding Principles are the First Necessity – #TEDatIBM

Posted by cheuer in Insytes, Leadership, Social Business, The Noble Pursuit on September 28th, 2015

Can you imagine a world where we could have such great trust in society that you no longer cared about your privacy? While some may already feel that way today, most of us could never imagine such a world, especially given what we have experienced over the past decade. At last year’s TED at IBM event, Marie Wallace addressed the challenge we face today with a very practical, evidence-based solution in her brilliant talk, “Privacy by Design”. Her talk is where my belief that we should abandon hope of any true privacy was replaced by hope that there was a vital, and indeed better, way.

In light of this year’s theme for TED at IBM being “Necessity and Invention”, I thought it important to revisit her talk and illuminate this topic from a fresh perspective. When it comes to the matter of privacy, Marie’s talk grounded me in the realization that our guiding principles are the first necessity. The theme, according to the conference web site, reflects our common wisdom on the subject:

“Necessity is the mother of invention—or so we have been led to believe. We cannot help but suspect that our needs to create and to shape the world around us run much deeper than simple pragmatism.”

It’s true. In my view, it is not pragmatism that is at the root of our inspiration to invent; it is our ability to be imaginative, our ability to overcome the challenges we face, and our desire not only to survive but to thrive. It is something innate in our very being. It is the unique combination of a bias towards action, a sense of greater purpose and a belief in our personal ability against all risks that drives many of us to invent. To create. To struggle against ‘the slings and arrows of outrageous fortune […] and by opposing end them’.

Unfortunately, as we have seen, particularly in the last century, absent a sense of true social responsibility, systems are designed and goods are brought to market out of a necessity that benefits a few at the expense of the many. All too often, it seems that data is being used to manipulate society broadly, and you specifically, instead of empowering us all.

Marie starts her talk with what I believe is one of the most powerful and important concepts of our modern era. It is also apparent from the Pope’s remarks last week during his US tour that this is a zeitgeist moment, one where many others are coming to the same realization: the idea that we can create a world by our own design, intentionally, not by inheritance or accident. As with the many lessons on life itself we have learned, we can choose to let it happen as it unfolds or we can direct it. So why not take an active role in shaping the world we’d like to see, and manifest it through thoughtful design? Today more than ever, this power is in our hands, not merely in the hands of a powerful few. But we must exercise it, not abdicate our rights out of a sense of helplessness. It’s a matter of intention and attention – do we want to create systems that are intentionally good for all, or to allow others to create systems that are used to manipulate us? Do we want to give attention and support to organizations and systems that are using their resources to manipulate us? I think not.

It is Marie Wallace’s central premise that “How we approach privacy, will have the single greatest impact on [our future society].” It not only establishes the expectations of every human relative to their role in the market, but also their role in the work force. Will our future society continue to be based on suspicion, absent any meaningful degree of trust? Or could it instead be more trustworthy as a result? She and I agree that it could be the latter, but it will take time and greater attention from us all in shaping this better future. As she said in her talk, “The reality is it doesn’t have to be like this. And I don’t think we want to live in a world where it will be like this.”

The alternative is potentially pretty scary, even to the least educated of us on this subject, and perhaps even to the apathetic, if they were to see how their data might be used to manipulate them instead of empower them. One of the examples she mentioned was an organization learning from its data analytics that you had a problem with body image, and using that insight to sell you diet pills. Countering that approach, what if the system was open and transparent with you, showed you the insights it generated and gave you options, instead of just taking advantage of your emotional state? It could suggest healthier recipes, encourage you to take a walk, or uplift your spirit and confidence. These are all possible with data analytics, but only if the controllers of the data and the insights are driven by positive intentions for you and society, instead of being motivated to sell you the most expensive diet pills on which they would make a profit.

Marie pointed out that even if you control your data with Do Not Track and other mechanisms, there are still data leaks from one system to another that can provide companies with data you do not want them to have. Recently I was given a demo of a new product that did a deep personality profile on me based on my public social media presence. It developed a profile of my emotional state, and also my psychographic drivers. For many this is the holy grail of marketing: being able to tailor ads based on psychoanalysis to understand what motivates me and hit my ‘hot buttons’.

Indeed, there were a few things it suggested about my personality that were troubling, and many that were flat out wrong because of how I have been proactively managing my public image on Twitter, and because of a recent #tweetfight I had. This is all data in the public domain, so I don’t necessarily have a problem with it, but I am concerned, as Marie is, about how others with less scrupulous intentions may use it. As a marketer, I am concerned about how a snapshot like the one it produced does not represent the whole of the person, and how even such advanced data analytics can still get it fundamentally wrong by basing such insights on a snapshot instead of the whole me and the deeper insights that would come from having a REAL Relationship with me.

Many will tell you that we need to accept all of this data is already out there and this is simply beyond our control. So the solution is to turn it off, to take ownership of our own data and to block advertising through our devices, as Apple recently enabled with the latest iOS update. But this is not a complete picture of the reality we face. As Marie points out, the current state is really a ‘privacy spaghetti’, or perhaps a ‘spaghetti monster’. We need to go to the root of the challenge and rethink our approach to this important topic from the ground up.

Which is exactly what she did in the IBM case study she shared on how they applied analytics to their vast trove of data generated from one of the oldest Enterprise Social Networks in existence. Instead of thinking of management and the employees as separate interests, she took the perspective of providing maximum value to all participants, not just the management. In taking the Privacy by Design approach, they built the foundation of their data analytics program rooted in privacy as a guiding principle that would rule all decisions and actions that followed, before writing a line of code.

In undertaking the project to better understand their employees, the IBM team embraced three core guiding principles. In my view, these are the prerequisite before the invention.

- A commitment to transparency and openness.

- Embrace simplicity and ease of use.

- Focus on personal empowerment.

By making these guidelines simple to understand and visible to all, they gave trust to gain trust. By giving employees actionable insights that would help them improve themselves as a principal benefit, and enabling them to choose whether or not to share those insights with others, it changed the dynamic of the relationship for the better in more ways than one. Management was still given access to the aggregated analysis to understand the important trends, challenges and opportunities, but that analysis did not unnecessarily reveal the private details of a uniquely identifiable individual.
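To make that split between personal insights and aggregated analysis concrete, here is a minimal sketch in Python. It is not IBM's implementation, which the talk does not describe at this level; the function names, the `(employee_id, team, score)` record shape and the minimum group size are all my own assumptions for illustration.

```python
from collections import defaultdict
from statistics import mean

# Assumption: teams smaller than this are hidden from management so that an
# average cannot be traced back to one identifiable person.
MIN_GROUP_SIZE = 5


def personal_view(records, employee_id):
    """Return only the (team, score) insights that belong to one employee."""
    return [(team, score) for eid, team, score in records if eid == employee_id]


def management_view(records, min_group_size=MIN_GROUP_SIZE):
    """Return the average score per team, omitting teams that are too small."""
    scores_by_team = defaultdict(list)
    for _employee_id, team, score in records:
        scores_by_team[team].append(score)
    return {
        team: round(mean(scores), 2)
        for team, scores in scores_by_team.items()
        if len(scores) >= min_group_size
    }
```

The design choice worth noticing is that the management function never returns an employee identifier at all, while the personal function returns nothing about anyone else.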

This resulted in a significant upside, the sort of upside that many of us have long been trying to prove to those who would choose to exploit the data instead of protecting and empowering individuals. According to Marie, “Demonstrating openness and transparency builds trust and it allows our users to engage more openly and freely with us and share more data. And more data means more value for them and for us. It’s a virtuous circle.” In short, their approach to Privacy by Design deepened their relationships with their employees. Instead of merely providing the other stakeholders with analytics, these deeper relationships provided increased engagement with interested employees that enabled the accomplishment of even more valuable outcomes.

To my original question – Can you imagine a world where we could have such great trust in society that you no longer cared about your privacy? I’d like to believe we could, by facilitating such transparency that we all knew what was available and where we had control over how it was being used. I’d like to intentionally design such a world, perhaps with you through my new community movement “We Are the Solution”. But in order to abandon my hope or interest in defending privacy, I first need greater confidence that unscrupulous people and companies who value profit above people are not able to use my data, or any data, in a manipulative way.

As you are probably keenly aware, this is not the world in which we live today. But it is a world we could design and build together if we choose to do so. As Scott Swhwaitzberg posits in “Trust me… there’s an app for that”, the combination of technology and transparency can make this world a reality. But first, we need to ensure that no corporate desire trumps the guiding principles of our shared values across society. This is why the first necessity is to embrace a common set of guiding principles. This is why we must support organizations that share and operate under such values with our hands, hearts and wallets, and deny such support to those that don’t.

Watch Marie Wallace’s talk, “Privacy by Design” on YouTube, and visit the TED at IBM web site to learn more about the upcoming event.

DISCLOSURE:

Like many of you, I love the inspiration and big thinking that comes out of TED. It’s why I helped to produce BIL back in 2008 and why I spoke at BIL again in 2014. It’s a part of who I am, which is why I attend TEDx whenever I can (and hope to speak at a few next year) and why I was so grateful to be invited to TED at IBM last year and will be attending again on October 15, 2015 as their guest. This post, while not required of me in exchange for my invitation, was written as a part of the IBM New Way to Engage futurist program of which I am a part and is being promoted by IBM through that program. While they are paying to promote it in social media, the words above are completely my own, except as otherwise quoted, and do not reflect the position of IBM.

Facebook Not Understanding Opt-In is Like Universal Missing Digital Music

Posted by cheuer in SocialMedia, Web2.0 on December 5th, 2007

There are a lot of smart people who have weighed in on the Facebook Beacon issue and Mark Zuckerberg’s apology today, and while I have not had time to read it all, it pretty much equates to a lot of bad PR for them. Then again, as they say in show business, any PR is good PR. This just furthers the broad, mainstream awareness of Facebook as a Social Networking Platform.

In the end, my take is that this is actually great for Facebook despite some of my scathing commentary below.

As Dave McClure points out today, the majority of FaceBook users who are uninformed consumers will look at this, and FaceBook’s response, without the cynicism that all of us longtime observers have and without the scorn for the obviousness of their mistake. While I respect Dave, and agree with many of his points, he misses out on the fact that having FaceBook know everything I do, nearly everywhere on the Web, was not part of the Faustian bargain we made when we signed up. Wasn’t it somewhere around 1998 when EVERYONE realized the only way to gain trust with users was through opt-in policies? In reading Mark Zuckerberg’s well-crafted statement produced by an army of PR professionals and lawyers, I stopped in my tracks when I read:

“The problem with our initial approach of making it an opt-out system instead of opt-in was that if someone forgot to decline to share something, Beacon still went ahead and shared it with their friends.”

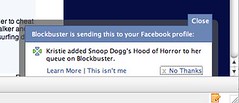

Well yes Mark, that is the problem with opt-out systems: they do things that people don’t necessarily want them to do. How can someone forget to decline to share something, when they aren’t even aware the system is really sharing it in the first place? Kristie and I found out about this firsthand when managing our queue on Blockbuster. She didn’t notice the small little window that popped up in the lower right corner; I merely remarked – “wait, what was that?” Only on the third instance did we actually see what it was doing and get there in time to “remember that we wanted to decline sharing something”.

Despite the minimal macro effects this has on the company, I have a ton of disdain and scorn for Mark agreeing to make this clearly moronic statement as part of his broader post…. but wait, there’s more. The very next line in his statement is even more offensive (and demeaning to his colleagues who tried to do the right thing):

“It took us too long after people started contacting us to change the product so that users had to explicitly approve what they wanted to share.”

What about all the smart people on their staff who realized this was silly? What about all the people who complained about the early spamming incidents of lead FB App developers who automatically sent invites to all of a person’s friends when adding an app? What about all the partners who agreed to implement this technology, and the smart people on those teams who most likely asked the same question all of us have been asking? Even now in reading this, I am concerned that they still have not understood how far from reality their philosophical understanding of privacy issues in social networks really is. I mean, doesn’t FaceBook, as a general principle, confine what is being shared only to those we agree to share this information with, even giving us the option of only sharing a “limited profile” when adding them?

Hmmmm – I don’t know Mark, so I can’t make a reasonable judgement on him personally, but I have had the pleasure of speaking with Dave Morin, their platform architect. I have to say, I expected better of him than this – he really seems to get it – though like Mark, I believe they are still young entrepreneurs and are perhaps not seasoned enough to be running the nation state that is FaceBook. Which is why perhaps these moves are indicative of the fact that they are not really running the show, or that the pressure has them relying on more experienced executives and the investors for advice instead of their own instincts. Perhaps we just need to form an advisory board of straight shooters who they could really rely on for hearing it straight, to support them as individuals and leaders of the FaceBook Nation.

A good point was made by Tom Foremski last week at the Something Simpler Systems conversation on Mining the Social Graph that I helped produce with my partner Ted Shelton. Tom said, paraphrasing, “What if trying to monetize these social environments merely pollutes them and destroys the real value they hold for the people who are there? They aren’t participating in FaceBook to make money, or to make money for others, they are there because they want to be social!” His post on MSFT: Setting Up Facebook For Failure, is a must read on this subject. In looking at the FaceBook Beacon fracas, I have to wonder who is really in charge, and if the eagerness to prove monetization means that the evil greedy stupid marketers are the ones really in charge.

Who is FaceBook’s Dick Cheney anyway? While Mark takes the slings and arrows of outrageous fortune, someone behind the scenes is sitting on their private jet saying “Oh well, that didn’t work. How did the market do today?”, all the while leaving Mark to take the fall with silly statements like this one today. But wait, it gets worse, and now I wonder if he has some septuagenarian crisis management expert writing his statements instead of a digital native:

“Last week we changed Beacon to be an opt-in system, and today we’re releasing a privacy control to turn off Beacon completely. You can find it here. If you select that you don’t want to share some Beacon actions or if you turn off Beacon, then Facebook won’t store those actions even when partners send them to Facebook.”

I know everyone else out there has made this point ad nauseam, but really, how can you dare claim it was changed to an opt-in system when your partners are still collecting data on our use of their sites and sending it to you? It seems as if whoever is really in charge over there is so tempted by the apples of user behaviour on the tree of knowledge that they not only took the first bite, they just can’t stop themselves… let’s hope this sin is not infectious.

Let’s be clear about this for everyday, non-technical folks. To say it is an opt-in system means that it should be off completely until you opt for it to be turned on at all. This version of what they call opt-in is a mutant merman, like the one that saves Lois on a recent Family Guy episode. It’s completely upside down and misses the point. This is why I think it is the equivalent of Universal Music’s CEO Doug Morris claiming there “wasn’t a thing he or anyone could have done differently”.
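For the technically inclined, the distinction can be sketched in a few lines of Python. This is only an illustration of what opt-in should mean, not FaceBook’s actual Beacon code; the class, the function and the partner names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class SharingPreferences:
    # Opt-in: the default is OFF, and no partner is approved until the user acts.
    beacon_enabled: bool = False
    approved_partners: set = field(default_factory=set)

    def opt_in(self, partner: str) -> None:
        """The user explicitly turns sharing on for one partner site."""
        self.beacon_enabled = True
        self.approved_partners.add(partner)

    def opt_out(self) -> None:
        """The user turns everything off again."""
        self.beacon_enabled = False
        self.approved_partners.clear()


def partner_may_send(prefs: SharingPreferences, partner: str) -> bool:
    """Checked before any data leaves the partner site, not after it arrives."""
    return prefs.beacon_enabled and partner in prefs.approved_partners


prefs = SharingPreferences()
print(partner_may_send(prefs, "movie-rental-site"))  # False until the user opts in
prefs.opt_in("movie-rental-site")
print(partner_may_send(prefs, "movie-rental-site"))  # True only after explicit consent
```

The point of the sketch is where the check lives: if the partner still sends the data and the network merely promises not to store it, the default was never really off.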

For Mark to make a claim like the one above, calling the ‘improved’ beacon program opt-in, demonstrates a cluelessness of gigantic proportions. Of course, the bigger problem I see here is that Mark is being forced to make such statements, not realizing that in today’s world, regardless of the multi-billion dollar corporate interests at stake, the principle the original users of The Well stated so eloquently still applies: “You Own Your Own Words”. So take responsibility and stand up for what is right Mark, don’t let them play you like this…

“I want to thank you for your feedback on Beacon over the past several weeks and hope that this new privacy control addresses any remaining issues we’ve heard about from you.”

Of course it doesn’t Mark, and you are seemingly smart enough to know this. I thought the practice of SPIN was dying, particularly with your generation, which has even less tolerance for BS than the rest of us. Please stop trying to defend this egregious invasion of privacy and do the right thing. While I am sure that the mainstream parts of society and the uninformed won’t care about, or remember, this a few months from now, WE won’t forget. So, let’s chalk it up to being an experiment gone awry, and rather than continuing to try to defend it, work with US to openly craft a better balance of privacy, social signaling and monetization opportunities. If we work together, I am sure we can come up with something that could really work for us instead of against us.

Doc Searls really drove home an important understanding about the bigger issues at play here in his series of posts about Making Rules. I may be interpreting it slightly differently, but this eloquent series of posts supports what I have been thinking about regarding Social Media’s ability to tear down the walls that make an individual’s interaction with a company an us vs. them proposition. This isn’t about the Executive Team at Facebook making decisions separately from its users, though they certainly have the right to do so as they are the ones in charge. It is my opinion that in the modern market, particularly for a social media company, it is imperative that the leadership thinks about it from the perspective of all of us working and creating our online communities together. As my wife Kristie Wells reminded me again, if it weren’t for our contributions, there would be no value in the company – we are absolutely the co-creators of this company and should be respected as such.

Of course, as Dave McClure and many others will tell me, if I don’t like it, I can just leave – just as many Americans said about what they would do if Bush was re-elected President. Like most of my friends (who still live in San Francisco 3+ years later), I would rather stay and work to create change from the inside, not by leaving the FaceBook Nation, but by being a part of the conversation and contributing towards positive change…

Technorati Tags: facebook, facebookbeacon, optin, clueless, markzuckerberg, privacyinvasion, facebooknation
